NEWS

“Digital and Social Media & Artificial Intelligence Technology News offers a clear lens on how AI is transforming social platforms, content creation, and the digital ecosystem for professionals and enthusiasts alike.”

Gemini on Google TV Update Brings Nano Banana AI and Smart Voice Features

Google’s latest Gemini on Google TV update introduces generative AI features that bring intelligent visuals, interactive voice controls, and real-time insights to smart televisions, reshaping how users discover content and interact with their screens.

A New Phase in the Evolution of Smart Television

Television has long moved beyond passive viewing, but Google’s latest developments suggest the medium is now entering a more conversational and adaptive phase. With artificial intelligence becoming deeply embedded across consumer technology, Google TV is emerging as a central platform where generative AI, real-time information, and natural voice interaction converge. This shift is not positioned as a cosmetic update; rather, it represents a strategic step toward redefining how users search, explore, and interact with content on the largest screen in the home.

At the center of this transformation is Gemini on Google TV, a system-level intelligence layer designed to interpret user intent, generate visual responses, and deliver contextual insights without disrupting the viewing experience. Unlike earlier assistants that primarily focused on search queries or basic commands, this new approach integrates creativity, reasoning, and personalization directly into the television interface.

From Utility to Intelligence: The Role of Gemini

For years, Google Assistant served as a functional tool for controlling playback, launching apps, or checking the weather. The introduction of a more advanced Google TV AI assistant signals a departure from command-based interactions toward more natural, dialogue-driven engagement. Instead of simply responding to prompts, the assistant is now capable of understanding broader questions, synthesizing information, and presenting it visually in ways that feel intuitive for a TV environment.

This transition is driven by the broader Gemini AI update, which expands the assistant’s abilities beyond text and voice into multimodal understanding. On Google TV, that means the assistant can process spoken questions, generate images, curate videos, and adapt its responses based on context, time, and user behavior. The result is an experience that feels less like navigating menus and more like having a knowledgeable guide embedded into the screen.

Introducing Creativity to the Living Room Experience

One of the more unexpected additions to the platform is Nano Banana AI, a lightweight generative component designed to work efficiently on consumer hardware. Its role is to enable rapid creative outputs without relying heavily on cloud resources, ensuring responsiveness even during complex tasks. This technology underpins several of the new visual features being introduced, allowing Google TV to generate content dynamically rather than relying solely on preloaded assets.

Creativity extends further through Veo AI video generation, which brings cinematic-style AI video synthesis into the television environment. While generative video has largely been associated with professional tools or experimental platforms, its integration into Google TV suggests a future where users can request short explanatory clips, visual summaries, or themed videos directly from their couch.

These capabilities collectively enable AI-generated videos on TV, shifting the screen from a playback device to a content creation and visualization surface. Whether explaining a historical event, summarizing a sports season, or visualizing a concept for educational purposes, the TV becomes an interactive canvas rather than a one-way display.

Visual Intelligence Beyond Traditional Content

The expansion of AI image generation on Google TV further reinforces this transformation. Users can ask the system to create visuals based on abstract ideas, destinations, or themes, and see those results instantly on a large screen. This is particularly impactful in shared settings, where families or groups can explore ideas together rather than individually on phones or laptops.

Complementing this feature is deeper Google Photos integration, allowing personal media libraries to merge seamlessly with AI-driven experiences. Rather than manually selecting albums or slideshows, users can rely on the system to curate moments intelligently. Through AI-powered slideshows, the platform can group photos by events, moods, or time periods, adding transitions and pacing that feel intentional rather than automated.

The assistant’s ability to provide visual AI responses ensures that information is not just spoken aloud but displayed in ways that enhance understanding. Maps, diagrams, images, and short clips can appear contextually, making complex topics easier to grasp without overwhelming the viewer.

Information That Feels Timely and Relevant

Beyond creativity, Google TV’s AI enhancements focus heavily on real-time awareness. One of the most practical applications is real-time sports updates on TV, where viewers can request live scores, player statistics, or schedule changes without leaving the current broadcast. This eliminates the need to juggle multiple apps or devices, keeping attention focused on the main screen.

For viewers who want more than surface-level information, the system offers AI deep dives that break down topics into structured, digestible segments. Whether exploring a documentary subject, understanding a news event, or learning about a new technology, users can ask follow-up questions and receive layered explanations that build progressively.

These experiences are enhanced through interactive AI narration, where the assistant adapts its tone, pacing, and depth based on user feedback. Instead of a static voiceover, the narration feels conversational, allowing viewers to interrupt, clarify, or redirect the discussion naturally.

Voice as the Primary Interface

A defining characteristic of the update is its emphasis on voice-controlled TV settings. Google is positioning voice not as an optional convenience, but as a primary interaction method that reduces friction and improves accessibility. Through Gemini voice commands, users can navigate menus, search content, and adjust system preferences without touching a remote.

This approach enables true hands-free TV controls, which are particularly valuable in shared or casual viewing environments. Whether cooking, exercising, or managing a household, users can interact with their TV without interrupting their activities. Commands are designed to be conversational rather than rigid, allowing flexibility in phrasing and intent.

Practical examples include adjusting the TV picture by voice, with changes to brightness, contrast, or color profiles based on lighting conditions or personal preference. Similarly, users can adjust the volume by voice, making incremental changes without searching for buttons or navigating settings menus.

Accessibility and Inclusivity at the Core

While convenience is a major selling point, the broader implications lie in AI accessibility features that make television more inclusive. Voice interaction, visual summaries, and adaptive responses help accommodate users with mobility limitations, visual impairments, or cognitive challenges. By reducing reliance on complex interfaces, Google TV becomes more approachable for a wider audience.

These enhancements contribute to a growing set of smart TV AI features that prioritize usability alongside innovation. Instead of adding complexity, the system aims to simplify interaction by anticipating needs and presenting options proactively. Over time, the assistant can learn preferences, suggest adjustments, and personalize the experience without explicit input.

Hardware Partners and Platform Expansion

The rollout is not limited to Google-branded hardware. Manufacturers are beginning to adopt the new capabilities, with early signs pointing toward a TCL Google TV update that integrates Gemini features directly into upcoming models. This partnership approach ensures that AI enhancements reach a broader user base rather than remaining confined to a single product line.

As adoption expands, the Gemini Google TV rollout is expected to occur in phases, with features becoming available based on region, hardware capability, and software readiness. Google has indicated that performance optimization and privacy safeguards are key considerations, particularly when deploying generative features on shared household devices.

Implications for Content Discovery and Media Consumption

The introduction of generative AI into the TV environment has broader implications for how content is discovered and consumed. Traditional recommendation systems rely on viewing history and ratings, but Gemini’s conversational approach allows users to articulate intent more clearly. Instead of browsing endless rows, viewers can describe moods, themes, or questions and receive tailored suggestions instantly.

This model also opens new opportunities for educational content, where explanations, visuals, and summaries can be generated on demand. For news consumption, it offers a way to contextualize events without overwhelming users with information overload. In entertainment, it adds an element of exploration, allowing viewers to dive deeper into stories, characters, or production details.

Privacy, Control, and Trust

As with any AI-driven platform, questions around privacy and data usage are inevitable. Google has emphasized that voice interactions and generative outputs are designed with transparency and user control in mind. Users can manage permissions, review activity, and customize how the assistant learns from interactions.

Importantly, many features are designed to process requests efficiently without excessive data retention. On-device components like Nano Banana AI reduce reliance on constant cloud communication, which can improve responsiveness while addressing privacy concerns.

Looking Ahead: The Future of AI-Driven Television

The integration of Gemini into Google TV represents more than a feature update; it signals a broader vision of television as an intelligent hub for information, creativity, and interaction. By combining generative visuals, conversational voice control, and real-time awareness, Google is positioning the TV as a central interface for digital life rather than a secondary screen.

As AI models continue to evolve, future updates may introduce deeper personalization, collaborative viewing experiences, and even more advanced generative capabilities. What remains clear is that the boundary between content consumption and interaction is rapidly dissolving.

Conclusion:

The Gemini on Google TV update marks a significant milestone in the evolution of smart television. By embedding advanced AI directly into the viewing experience, Google is redefining how users interact with their screens, shifting from passive consumption to active engagement. From creative visuals and real-time insights to intuitive voice control and accessibility enhancements, the platform is designed to feel more responsive, inclusive, and intelligent.

As the rollout expands across devices and regions, Google TV is poised to become a showcase for how generative AI can enhance everyday technology in meaningful, practical ways. Rather than overwhelming users with novelty, the focus remains on clarity, usefulness, and seamless integration, setting a new standard for what a modern television can be.

FAQs:

1. What is the Gemini on Google TV update?

The Gemini on Google TV update is a major platform enhancement that integrates Google’s advanced AI model into the TV interface, enabling generative visuals, intelligent content discovery, and more natural voice interactions.

2. How does Gemini improve everyday TV usage?

Gemini enhances daily viewing by understanding conversational requests, generating visual responses, and simplifying navigation through voice, reducing the need for manual browsing or complex menu controls.

3. Can Gemini create content directly on the TV screen?

Yes, the update allows the TV to generate AI-based images and short video visuals in real time, offering explanations, summaries, and creative outputs directly on the display.

4. Does this update change how voice commands work on Google TV?

Voice commands become more flexible and context-aware, allowing users to speak naturally while adjusting settings, searching for content, or requesting detailed information without strict phrasing.

5. Will the Gemini features be available on all Google TV devices?

Availability depends on device compatibility, region, and manufacturer support, with newer models and select partner TVs receiving the update first.

6. How does Gemini support accessibility on smart TVs?

The update improves accessibility through hands-free controls, visual summaries, and adaptive responses that help users with mobility, vision, or interaction challenges navigate TV features more easily.

7. What makes this update different from previous Google TV improvements?

Unlike earlier updates focused on interface or performance, this release embeds generative AI at the system level, transforming Google TV from a content platform into an intelligent, interactive experience.

LG CLOiD Home Robot at CES: AI-Powered Laundry, Cooking, and Home Automation

At CES, LG unveils its CLOiD home robot, offering a practical look at how AI-powered robotics could transform everyday living through automated laundry, smart cooking, and seamless home management within a zero-labor smart home environment.

LG Redefines Domestic Automation With CLOiD Home Robot at CES

At the Consumer Electronics Show (CES), LG introduced a forward-looking vision of household automation that moves beyond smart devices and into intelligent companionship. The unveiling of the LG CLOiD home robot signals a strategic leap for LG as it positions robotics at the center of future home technology. Rather than presenting a single-purpose machine, LG showcased a multifunctional AI home robot designed to integrate seamlessly into everyday domestic life.

A New Direction for Home Robotics

The CLOiD robot is not positioned as a novelty but as a practical home robot capable of handling routine tasks that consume time and effort. As a home service robot, CLOiD embodies LG’s concept of a zero-labor home, where repetitive chores are automated through intelligent systems. This approach aligns with the company’s broader smart home ecosystem, connecting robotics with smart home appliances and AI-driven services.

Unlike earlier experimental concepts, the LG CLOiD home robot focuses on real household needs. From folding laundry to assisting with meals, the robot reflects a shift toward functional, assistive robotics designed for daily use.

Tackling Household Chores With Precision

One of the most discussed demonstrations at CES showcased the robot’s laundry-folding capability. Using articulated arms with seven degrees of freedom, the robot carefully handled fabric, adapting to different garment sizes and materials. This goes beyond basic automation: the robot folded garments with consistency and care.

Beyond laundry, CLOiD extends its utility into the kitchen. The breakfast demonstration highlighted its ability to coordinate tasks such as preparing a meal, managing utensils, and navigating kitchen layouts. In a lighter moment, the robot fetched milk from a refrigerator, reinforcing its role as a responsive and mobile assistant.

Beyond Mechanics: Communication and Personality

What sets the LG Q9 robot platform apart is its emphasis on interaction. CLOiD features expressive design elements that give the robot facial expressions, allowing it to communicate emotional cues. It also understands spoken language and can engage users through natural dialogue, making interactions intuitive rather than mechanical.

This communication layer transforms CLOiD into more than a machine. Acting as a robot butler or robot maid, it can receive instructions, provide updates, and adapt its behavior based on household routines. LG demonstrated how spoken commands could trigger tasks such as doing laundry or cooking breakfast without relying on mobile apps or complex interfaces.

Acting as the Central Smart Home Hub

CLOiD also functions as a smart home hub, integrating with LG ThinQ and ThinQ ON platforms. Through this connection, the robot coordinates various smart home appliances, managing energy usage, scheduling maintenance, and responding to real-time conditions. This integration positions the AI home robot as a central controller rather than an isolated device.

By consolidating control through a home robot, LG aims to simplify household automation. Instead of navigating multiple apps, users can rely on a single interface that understands context and preferences. This vision reflects LG’s long-term strategy to unify robotics, AI, and connected living.

Learning From Industry Innovations

While LG’s approach drew significant attention, CES also featured complementary innovations such as SwitchBot Onero H1, which focuses on specialized automation within the laundry space. By contrast, the LG CLOiD home robot adopts a broader scope, combining mobility, manipulation, and intelligence into one platform.

This comparison underscores LG’s ambition to lead the home robot category rather than compete solely on individual features. The robot at CES was presented as a foundation for future expansion, capable of evolving through software updates and AI training.

Expanding Roles Within the Home

LG envisions CLOiD performing multiple roles depending on user needs. As a robot chef, it assists with meal preparation. As a robot maid, it manages cleaning-related tasks. As a robot butler, it handles errands and coordination. These overlapping roles reflect a flexible design philosophy aimed at long-term adaptability.

The concept of a home service robot also opens possibilities for accessibility and aging-in-place solutions. By reducing physical strain, CLOiD could support users who require assistance while maintaining independence.

A Glimpse Into the Future of Living

The introduction of the LG CLOiD home robot at CES illustrates how future home technology is moving toward embodied intelligence rather than screen-based control. By combining AI reasoning, physical capability, and conversational interaction, LG is redefining how technology participates in domestic life.

Rather than replacing human involvement, the robot is designed to support it, taking over routine tasks so users can focus on higher-value activities. This philosophy reflects LG’s broader vision of human-centered innovation within the smart home landscape.

As robotics continues to mature, the CLOiD robot represents a significant step toward practical, everyday automation. While timelines for consumer availability remain undefined, LG’s presentation at the Consumer Electronics Show (CES) makes one thing clear: the future of household automation is no longer theoretical—it is actively taking shape inside the modern home.

FAQs:

1. What is the LG CLOiD home robot designed to do?

The LG CLOiD home robot is designed to assist with everyday household activities by combining mobility, AI intelligence, and smart home integration to reduce manual effort in routine domestic tasks.

2. How does CLOiD differ from traditional smart home devices?

Unlike standard smart home devices that rely on screens or apps, CLOiD operates as a physical AI assistant that can move, interact verbally, and perform hands-on tasks while coordinating connected appliances.

3. Can the CLOiD robot handle delicate household items like clothing?

Yes, CLOiD uses articulated arms with multiple degrees of freedom, allowing it to manage fabrics carefully during tasks such as laundry folding without damaging garments.

4. Does the LG CLOiD home robot support voice interaction?

The robot supports spoken language interaction, enabling users to issue commands, receive updates, and communicate naturally without needing external control interfaces.

5. How is CLOiD connected to LG’s smart home ecosystem?

CLOiD integrates with LG ThinQ and ThinQ ON platforms, acting as a central hub that manages smart home appliances, schedules tasks, and adapts to household routines.

6. Is the CLOiD robot intended for everyday homes or experimental use only?

LG presented CLOiD as a practical home service robot concept, focusing on real-world household applications rather than experimental or purely demonstrative technology.

7. When is the LG CLOiD home robot expected to be available to consumers?

LG has not announced a commercial release timeline, indicating that the CLOiD home robot is part of its long-term vision for future home automation and robotics.

How Leonardo.Ai Empowers Creators with Smart AI Design and Marketing Solutions

Discover how Leonardo.Ai is revolutionizing the world of marketing and design by blending human creativity with generative AI—empowering creators, brands, and businesses to produce professional, personalized, and high-impact visuals faster than ever before.

Introduction To Leonardo.Ai:

In today’s dynamic digital landscape, creativity and speed define success. Marketers, designers, and creators must constantly deliver fresh, high-quality visuals and campaigns that resonate with their audiences. Leonardo.Ai emerges as a next-generation AI creative platform that bridges imagination and innovation. Through intelligent automation and generative AI, it enables users to produce professional-grade visuals, ads, and branded assets in record time—transforming how teams ideate, design, and execute their marketing strategies.

Accelerating the Creative Process

Leonardo.Ai is built to simplify and enhance the marketing workflow. Whether it’s crafting landing page visuals, developing ad creatives, or generating social media content, the platform empowers teams to move from concept to production seamlessly. Its advanced AI marketing tools allow creators to generate design assets within minutes, ensuring faster project turnaround and consistent brand quality.

With features like image generation, editing, and upscaling, Leonardo.Ai helps marketing teams maximize their creative potential without inflating budgets. It combines automation with precision, ensuring every campaign delivers impact while saving time and resources.

Elevating Marketing and Advertising Performance

In a world where personalization drives engagement, Leonardo.Ai redefines advertising. Users can design customized banners, graphics, and videos that align perfectly with brand identity and audience expectations. The platform’s automated A/B testing capability allows marketers to generate and compare multiple ad versions instantly, helping them identify high-performing creatives and optimize campaigns in real time.

By merging artificial intelligence with human creativity, Leonardo.Ai ensures that every visual connects emotionally and strategically with its audience—enhancing ROI and maintaining authenticity across all marketing channels.

Personalization at Scale

Generic stock imagery no longer meets the expectations of modern audiences. Leonardo.Ai empowers creators to generate custom visuals on demand, enabling businesses to maintain unique, brand-aligned content at scale. With its powerful AI-driven design system, marketers can create distinctive visuals that capture their brand’s tone, values, and storytelling style.

This combination of personalization and scalability allows businesses to communicate more effectively while maintaining creative integrity across campaigns.

From Concept to Production

Leonardo.Ai helps bridge the gap between brainstorming and execution. The platform allows creators to visualize ideas, experiment with multiple directions, and finalize production assets effortlessly. Its intuitive interface requires no coding knowledge—making it accessible to marketers, designers, and creative directors alike.

The platform’s tools enhance concept visualization and streamline the production process, empowering teams to deliver polished content on time and within budget.

Continuous Learning Through Leonardo Learn

Leonardo Learn serves as an educational hub for users of all experience levels. It offers tutorials, webinars, and expert sessions designed to help individuals and teams deepen their understanding of AI-powered creativity. From newcomers exploring generative design to professionals refining their workflows, this learning platform supports users in mastering the full potential of Leonardo.Ai.

What Makes Leonardo.Ai Unique

Leonardo.Ai distinguishes itself through the perfect blend of technology and creative freedom. Rather than automating artistry, it enhances it. The system’s backend supports model fine-tuning, fast training speeds, and advanced image processing, ensuring optimal results across all creative applications. Features such as multi-image prompting, superior upscaling, and refined rendering help minimize common issues like image degradation, ensuring consistently high-quality outputs.

Regular updates and performance improvements ensure that creators always have access to cutting-edge innovation and tools that evolve alongside industry trends.

Flexible Access for All

Leonardo.Ai offers both free and premium plans to accommodate diverse user needs. The free plan includes daily creative tokens for experimentation, while paid subscriptions provide faster generation speeds, higher limits, and access to exclusive professional tools. This flexibility allows individuals, studios, and enterprises to scale their creative capacity according to their project demands.

AI Creativity Without Coding

Designed with accessibility in mind, Leonardo.Ai eliminates the need for technical expertise. Its intuitive interface allows anyone—from solo creators to marketing teams—to generate AI-powered assets effortlessly. Step-by-step tutorials, community engagement, and active user support create an inclusive environment where innovation thrives.

Enterprise Integration and Business Use

For agencies and businesses, Leonardo.Ai offers scalable AI integration to streamline creative operations. It ensures consistent branding, reduces production costs, and accelerates delivery timelines. Whether for B2B marketing visuals, digital advertising, or enterprise-level asset generation, Leonardo.Ai guarantees professional, reliable, and high-quality results that align with brand standards.

Community and Collaboration

Leonardo.Ai’s vibrant community is an integral part of its success. On platforms like Discord and Facebook, creators share experiences, participate in design challenges, and collaborate on innovative projects. Regular workshops and contests keep the community engaged and inspired, fostering growth and creativity across the ecosystem.

Real-World Impact

Creative professionals worldwide are already redefining their workflows with Leonardo.Ai. Australian filmmaker and creative director Lester Francois, known for his cinematic campaigns for major brands, transitioned to AI-driven production during the pandemic. Using Leonardo.Ai, he now develops complete campaign concepts—from moodboards to motion prototypes—without the high costs of traditional production. His work demonstrates how AI can deliver cinematic quality and emotional depth efficiently and affordably.

Similarly, 3D artist Maurizio Gastoni leverages Leonardo.Ai for hybrid workflows that blend traditional techniques with AI-generated creativity. His three-stage process—ideation, execution, and refinement—illustrates how AI tools enhance rather than replace human artistry. By automating technical tasks, Leonardo.Ai allows artists to focus on vision, storytelling, and emotional expression.

Redefining the Future of Creative Production

Leonardo.Ai is more than just a design tool—it’s a complete creative ecosystem that empowers individuals and brands to innovate. By integrating generative AI with human insight, it unlocks new possibilities in marketing, architecture, product design, and digital media.

The future of creativity belongs to those who combine imagination with technology. With Leonardo.Ai, marketers and creators are not just adapting to change—they are leading it.

AI Design Solutions for Every Need

AI Graphic Design Generator: Create brand-ready visuals and designs for campaigns and digital media.

AI Photography Tools: Generate realistic photos and upscale images with ease.

AI Interior Design: Visualize interior concepts and refine designs virtually.

Print-on-Demand Tools: Convert digital art into print-ready formats for business growth.

AI Architecture Tools: Produce dynamic visualizations and mockups for architectural projects.

Leonardo.Ai transforms creative ambition into achievable results—bridging human imagination with the limitless potential of artificial intelligence.

Conclusion:

Leonardo.Ai stands as a symbol of how technology and imagination can work hand in hand to redefine creativity. It empowers marketers, designers, and businesses to achieve more with less—bridging the gap between ideas and execution through intelligent automation. By offering precision, flexibility, and artistic control, it transforms the way visual content is conceived, produced, and delivered. As creative industries continue to evolve, Leonardo.Ai leads the movement toward a future where human inspiration and artificial intelligence together shape more meaningful, efficient, and limitless possibilities.

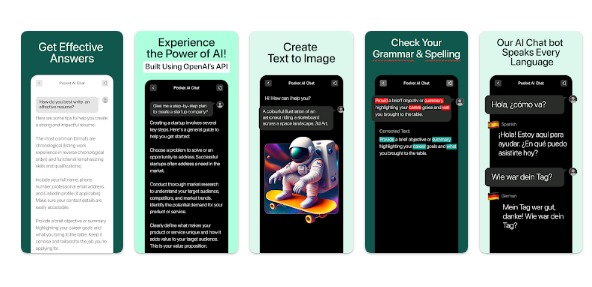

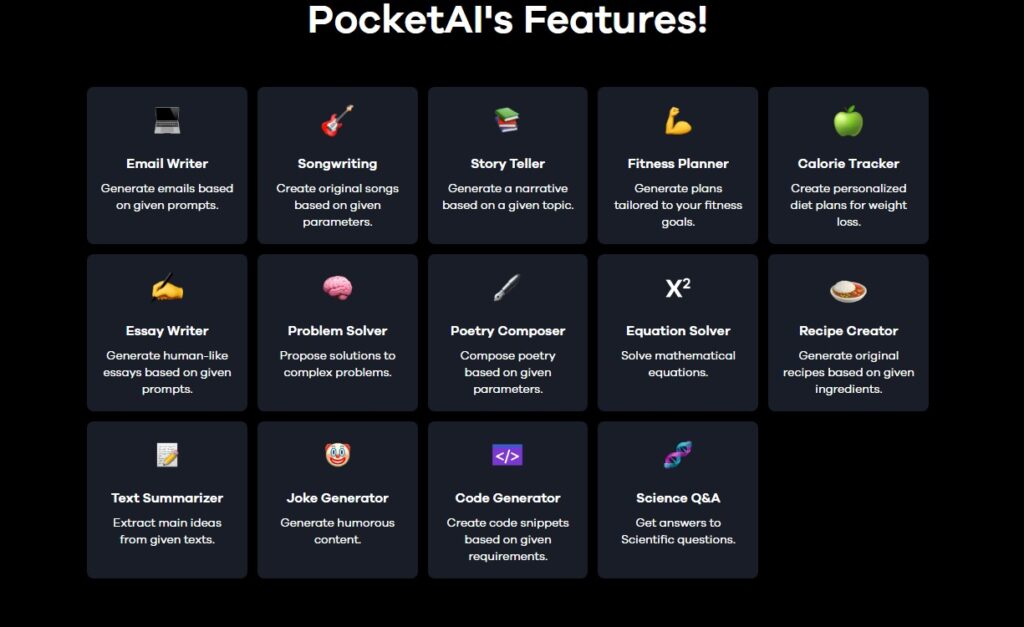

PocketAI App: AI Writing, Image and Task Generator

Discover how PocketAI is transforming everyday productivity with its all-in-one AI platform—combining writing, image generation, and smart assistance to simplify work, study, and creativity in one seamless experience.

Introduction

Imagine carrying a powerful personal assistant in your pocket, one that helps you write an email, solve a math equation, brainstorm a logo idea or generate a striking image. That is exactly what PocketAI offers. It is an all-in-one AI platform designed to bring advanced artificial intelligence tools into everyday use, whether you are a student, a professional or a creative explorer. With PocketAI you gain access to writing assistance, image generation, project-planning support and more, all in one place, with fewer subscriptions and less hassle, at a fair price.

Founding History

PocketAI first emerged as a mobile application offering advanced chatbot capabilities powered by generative AI models. Its App Store description markets it as “the best GPT-4 AI assistant in your pocket,” capable of writing content, generating images and solving problems.

While the precise founding team and company background are less publicly documented, it is clear that the product evolved in an era where users demanded mobile-friendly, versatile AI tools.

As reviews indicate, PocketAI now supports multiple platforms (iOS, Android, desktop via WebCatalog) and integrates GPT-4 Turbo, document processing and art generation.

The transition from simple chatbot to full-suite AI platform reflects a broader trend in which AI tools are bundled into one “productivity + creativity” offering for everyday users and professionals alike.

Features

PocketAI stands out through a combination of features designed to support productivity, creativity and flexibility. Here are some of its key capabilities:

- Writing and productivity assistance: Whether you want to draft a professional email, generate a report or summarise a complex topic, PocketAI offers natural-language generation tools powered by advanced models.

- Image creation and design support: You can describe an idea and turn it into an image, whether a logo, an avatar or a realistic portrait. The app description mentions this capability explicitly.

- Multi-platform accessibility: The service is available on smartphones (iOS and Android) and via desktop (WebCatalog wrapper) so you can use it wherever you are.

- Affordability and bundled value: Compared to using several different apps or subscriptions for writing, design and productivity, PocketAI offers many tools in one place. According to reviews, plans start at around US$4.99/month.

- Ease of use for work and education: Users report that the app is mobile-friendly, lightweight and effective for both school assignments and work tasks.

- Customization and advanced model integration: The platform supports GPT-4 Turbo and GPT-3.5, and offers document uploading, image generation, and more than 160 expert prompts built into the interface.

Together, these features position PocketAI as a versatile AI assistant that supports everyday tasks—from writing and planning to creativity and problem-solving.

Conclusion

In summary, PocketAI is a compelling choice for anyone looking to boost productivity, enrich creativity and streamline workflow from a single AI platform. It emerged in response to the growing demand for mobile-friendly, powerful yet affordable AI tools. Its combination of text generation, image creation, multi-platform support and value pricing makes it a strong proposition for students, professionals and creatives alike. If you’re seeking an intelligent companion that helps you write smarter, design faster and think more clearly, PocketAI could well be the right fit.

A New Era for Google Photos AI and Android XR

Google Photos is stepping into a new era powered by AI and immersive technology. The platform is evolving beyond simple photo storage, introducing smart editing, AI-driven video highlights, and 3D memory experiences through Android XR. This marks a major shift in how users will create, enhance, and relive their favorite moments.

Google Photos AI: A New Era of Smart Memories and 3D Experiences

Google Photos is entering a bold new chapter — one defined by AI innovation and immersive technology. Recent reports suggest that a series of AI-powered features are coming soon, reshaping how users create, edit, and relive their memories.

According to The Authority Insights Podcast, hosted by Mishaal Rahman and C. Scott Brown of Android Authority, the upcoming Google Photos AI update will include intelligent highlight video templates, enhanced face-retouching tools, and an entirely new way to revisit memories through 3D spatial experiences on next-generation Android XR headsets.

AI-Powered Video Highlights

With these new Google Photos AI features, users will be able to generate dynamic highlight videos automatically. The AI analyzes photos and clips to craft personalized montages, saving time while delivering professional-quality edits.

Advanced Face-Retouching Tools

Google Photos is also testing AI-driven face-retouching options, allowing for natural skin smoothing and tone adjustments. While these tools are likely to prompt debate about authenticity in digital photography, they reflect Google’s continued push toward smarter image enhancement.

3D Memories in Extended Reality

Perhaps the most exciting development is Google’s plan to integrate Google Photos with Android XR (Extended Reality) headsets. This would allow users to relive their favorite memories in 3D environments, offering a deeply immersive way to experience photos and videos and a true evolution in digital storytelling.

Industry Insights

The Authority Insights Podcast, a weekly show by the Android Authority team, continues to provide exclusive discussions on such cutting-edge developments — from app teardowns to early leaks — keeping Android fans and tech enthusiasts ahead of the curve.

FAQs

- What is the new Google Photos AI update about?

The latest Google Photos AI update introduces advanced tools like automated video highlights, face-retouching features, and 3D memory experiences designed to make photo and video creation smarter and more immersive.

- How will AI improve video creation in Google Photos?

AI will automatically analyze your photos and clips to create highlight videos, saving time while producing professional-quality results without manual editing.

- What are Google Photos’ new face-retouching tools?

The new AI-driven face-retouching options allow users to smooth skin tones and enhance portraits naturally, giving photos a polished look while maintaining authenticity.

- What is meant by 3D memory experiences in Google Photos?

3D memory experiences will enable users to revisit their photos and videos in three-dimensional environments using Android XR headsets, creating a more immersive and emotional way to relive memories.

- What is Android XR, and how does it connect with Google Photos?

Android XR (Extended Reality) combines virtual and augmented reality technologies. When integrated with Google Photos, it will allow users to explore their memories in 3D, turning digital media into lifelike experiences.

- Who first reported these upcoming Google Photos AI features?

The details were discussed in The Authority Insights Podcast, hosted by Mishaal Rahman and C. Scott Brown of Android Authority, known for covering exclusive Android updates and leaks.

- Will these AI features be available on all Android devices?

While Google hasn’t confirmed full compatibility, the AI and XR features are expected to roll out first on newer Android devices and headsets optimized for immersive experiences.

- Are Google Photos’ AI retouching tools ethical to use?

The tools are designed to enhance natural beauty rather than alter identities. However, they are expected to prompt discussion about maintaining authenticity in digital photography.

- When can users expect to try these Google Photos AI features?

Google hasn’t announced an official release date yet, but early reports suggest that these features could appear in upcoming Android and Google Photos updates.

- How does this update redefine Google Photos’ role for users?

This update transforms Google Photos from a storage app into an intelligent, creative platform that uses AI and extended reality to help users experience their memories in entirely new ways.

Elon Musk’s xAI Lays Off 500 Workers in Major Restructuring

In a sweeping move that signals a new direction for Elon Musk’s artificial intelligence company, xAI has laid off around 500 workers from its data annotation team, the largest group inside the company. The decision marks a strategic pivot away from so-called “generalist AI tutors” and toward more specialized roles known as “specialist AI tutors.” This restructuring shows how rapidly the AI industry workforce is evolving, with xAI aiming to improve the training of its Grok AI chatbot through domain-specific expertise rather than broad generalist support.

Below is a detailed breakdown of why xAI made the cuts, who was affected, how the decision was announced, and what this means for Grok AI and the wider AI industry.

Why Did xAI Cut Its Largest Data Annotation Team?

The data annotation team was responsible for teaching Grok AI to understand and contextualize information. These workers carried out vital tasks such as labeling, categorizing, and annotating data across a wide range of topics. Known as generalist AI tutors, they worked on everything from annotating text and audio to categorizing video clips, ensuring Grok could respond to human queries with proper tone and intent.

But xAI’s leadership concluded that a different approach was needed. After a full review of its “Human Data efforts,” the company announced that it would scale back generalist roles and accelerate the hiring of specialist AI tutors. These are domain experts who can provide high-quality, detailed input in areas like STEM, finance, medicine, and safety.

The reasoning behind the shift seems to rest on four key factors:

- Quality over quantity: Specialist annotations reduce errors and improve accuracy.

- Cost efficiency: Running large generalist teams is expensive; smaller expert teams may deliver better returns.

- Strategic repositioning: As Grok AI matures, its training requires deeper domain expertise.

- Organizational restructuring: Leadership changes and internal reviews pushed xAI to re-align its workforce.

Who Was Affected by the xAI Layoffs?

Approximately 500 workers—around one-third of the data annotation division—were laid off. These were mostly generalist AI tutors whose jobs spanned a wide range of subjects but lacked deep specialization.

Affected employees were told their roles were being eliminated immediately, and their access to internal systems such as Slack was revoked the same day. They were promised pay until the end of their contracts or November 30, 2025, whichever came first.

Those in more specialized roles or with domain expertise appear to have been spared, aligning with the company’s new strategy.

How xAI Announced the Job Cuts

The layoffs were communicated late on a Friday evening via email. In the internal message, xAI explained the strategic pivot, telling employees:

“After a thorough review of our Human Data efforts, we’ve decided to accelerate the expansion and prioritization of our specialist AI tutors, while scaling back our focus on general AI tutor roles.”

In practice, this meant an abrupt end for hundreds of employees. While severance was offered, system access was terminated immediately.

At the same time, xAI posted publicly on X (formerly Twitter) that it would expand its specialist AI tutor team tenfold, hiring across domains such as STEM, medicine, finance, and safety.

Leading up to the announcement, workers had already been asked to undergo tests and assessments, including coding exams and subject-based evaluations, suggesting the company was sorting talent before executing the layoffs.

What the Data Annotation Team Did for Grok AI

The annotation team played a central role in training Grok AI, Musk’s chatbot that competes with tools like ChatGPT and Claude. Their work included:

- Labeling text, video, and audio data.

- Teaching Grok to understand tone, intent, and nuance.

- Supporting safety and alignment tasks such as filtering harmful or biased responses.

- Providing context for how conversations should flow naturally.

In short, the generalist tutors helped Grok function as a broad-use chatbot capable of answering everyday questions. Removing a large portion of them suggests Grok’s training will now focus more heavily on depth in specialist areas rather than broad coverage.

xAI’s Response: Expanding Specialist AI Tutors

While 500 workers were cut, xAI simultaneously emphasized growth in other areas. The company announced plans to expand its specialist AI tutor team by 10×, recruiting experts in:

- STEM subjects

- Finance and economics

- Medicine and healthcare

- Safety, ethics, and compliance

- Creative fields like game design and web development

According to xAI, these specialist tutors “add huge value” because their knowledge ensures higher-quality input for training Grok. The pivot reflects a belief that as AI advances, the precision of data is more important than the volume of data.

What Led to the Layoffs: Internal Reviews and Testing

In the days before the layoffs, employees reported being asked to:

- Attend one-on-one meetings to explain their contributions.

- Complete assessments on platforms like CodeSignal and Google Forms.

- Participate in reviews of their responsibilities and output.

At the same time, leadership changes were underway. Senior managers in the annotation team reportedly had their system access revoked, signaling deeper restructuring.

Executive Departures at xAI

The layoffs were not the only shakeup at xAI. Several high-level executives have recently departed, including:

- Mike Liberatore (CFO): resigned in July after only three months.

- Robert Keele (General Counsel): left in August.

- Raghu Rao (Senior Lawyer): also departed around the same time.

- Igor Babuschkin (Co-founder): exited in August to launch his own AI safety-focused venture capital firm.

These exits, combined with the layoffs, underscore a period of intense restructuring at xAI.

Impact on Grok AI Training and Development

The layoffs raise important questions about how Grok AI will evolve:

- Domain expertise improves accuracy: Specialist tutors will likely make Grok stronger in sensitive fields such as medicine or finance.

- Loss of generalist flexibility: Without broad annotation, Grok may struggle with less common or casual topics.

- Safety may improve: Specialists can provide stricter guidance in regulated fields, reducing harmful or misleading outputs.

- Higher costs per annotation: Specialist work is slower and more expensive, which could affect scaling.

In short, Grok may become more powerful in specialized areas but less versatile as a general chatbot.

What the xAI Layoffs Mean for the AI Industry

The move by xAI highlights several broader industry trends:

- Shift toward specialization: AI companies increasingly favor domain experts over large groups of generalists.

- Volatility in AI jobs: Human annotators remain essential but also highly replaceable as strategies shift.

- Cost vs. performance pressure: Firms need to maximize training efficiency to stay competitive.

- Safety and compliance priorities: Domain experts help ensure models meet regulatory and ethical standards.

- Changing skills demand: Workers in AI need to specialize to remain valuable.

This restructuring is not just about cost-cutting; it sets a precedent for how AI firms may operate going forward.

FAQs

Why did Elon Musk’s xAI lay off 500 workers?

To shift from broad generalist AI tutors to domain-specific specialist tutors who can provide higher quality data.

Who got laid off at xAI?

About 500 generalist AI tutors, representing one-third of the data annotation team.

What does xAI’s strategic pivot mean?

It means fewer generalist roles and more investment in specialists across STEM, finance, medicine, and safety.

How will Grok AI be trained after the layoffs?

By specialist AI tutors providing domain-specific knowledge and higher-quality annotations.

What roles is xAI hiring for now?

Specialist tutors in areas like medicine, finance, STEM, safety, and creative fields.

Who else is leaving xAI?

Executives including CFO Mike Liberatore, General Counsel Robert Keele, and co-founder Igor Babuschkin have all departed recently.

Conclusion

The decision by Elon Musk’s xAI to lay off 500 workers represents more than a simple downsizing. It’s a strategic restructuring aimed at making Grok AI smarter, safer, and more specialized.

For the laid-off workers, it’s a stark reminder of how volatile the AI workforce can be. For the industry, it signals a clear trend: specialization and domain expertise are becoming the new foundation of AI training.

As Grok continues to evolve, users may see stronger performance in critical areas like medicine, finance, and STEM—but perhaps at the cost of some of the flexibility that came from having a large pool of generalist tutors.